About cookies on this site Our websites require some cookies to function properly (required). In addition, other cookies may be used with your consent to analyze site usage, improve the user experience and for advertising. For more information, please review your options. By visiting our website, you agree to our processing of information as described in IBM’sprivacy statement. To provide a smooth navigation, your cookie preferences will be shared across the IBM web domains listed here.

Tutorial

Analyzing and forecasting with time series data using ARIMA models in R

Create and assess ARIMA models using R on watsonx.ai

The ARIMA algorithm (ARIMA stands for Autoregressive Integrated Moving Average) is used for time series analysis and for forecasting possible future values of a time series. Autoregressive modeling and Moving Average modeling are two different approaches to forecasting time series data. ARIMA integrates these two approaches, hence the name. ARIMA is one of the most widely used approaches to time series forecasting and it can be used in different ways depending on the type of time series data that you're working with.

Forecasting is a branch of machine learning using the past behavior of a time series to predict the one or more future values of that time series. Imagine that you're buying ice cream to stock a small shop. If you know that sales of ice cream have been rising steadily as the weather warms, you should probably predict that next week's order should be a little bigger than this week's order. How much bigger should depend on the amount that this week's sales differ from last week's sales. We can't forecast the future without a past to which to compare it, so past time series data is very important for ARIMA and for all forecasting and time series analysis methods.

In this tutorial we'll use what's known as the Box-Jenkins method of forecasting. The approach starts with the assumption that the process that generated the time series can be approximated using an ARMA model if it is stationary. This method consists of four steps:

Identification: Assess whether the time series is stationary, and if not, how many differences are required to make it stationary. Then, generate differenced data for use in diagnostic plots. Identify the parameters of an ARIMA model for the data from auto-correlation and partial auto-correlation.

Estimation: Use the data to train the parameters (that is, the coefficients) of the model.

Diagnostic Checking: Evaluate the fitted model in the context of the available data and check for areas where the model can be improved. In particular, this involves checking for overfitting and calculating the residual errors.

Forecasting: Now that you have a model, you can begin forecasting values with your model.

In this tutorial you'll learn about how ARIMA models can help you analyze and create forecasts from time series data. You'll learn how to create and assess ARIMA models using R in a Jupyter notebook on IBM watsonx.ai platform with a data set imported from the FPP library, one of the canonical time series libraries in R.

Prerequisites

You need an IBM Cloud account to create a watsonx.ai project.

Steps

Step 1. Set up your environment

While you can choose from several tools, this tutorial walks you through how to set up an IBM account to use a Jupyter Notebook. Jupyter Notebooks are widely used within data science to combine code, text, images, and data visualizations to formulate a well-formed analysis.

Log in to watsonx.ai using your IBM Cloud account.

Create a watsonx.ai project.

Create a Jupyter Notebook using the R Kernel.

This step will open a Notebook environment where you can load your data set and copy the code from this tutorial to perform the time series analysis and ARIMA modeling.

Step 2: Install and import libraries

We’ll use two libraries for creating our ARIMA models. First, the forecast package, which is a library containing methods and tools for displaying and analyzing univariate time series forecasts including exponential smoothing via state space models and automatic ARIMA modeling. Second, the fpp2 library, which contains test data for us to explore. Finally, the tseries library which is a library containing utilities for series analysis and computational finance.

library(forecast)

library(fpp2)

library(tseries)

Step 3: Load the data and do EDA

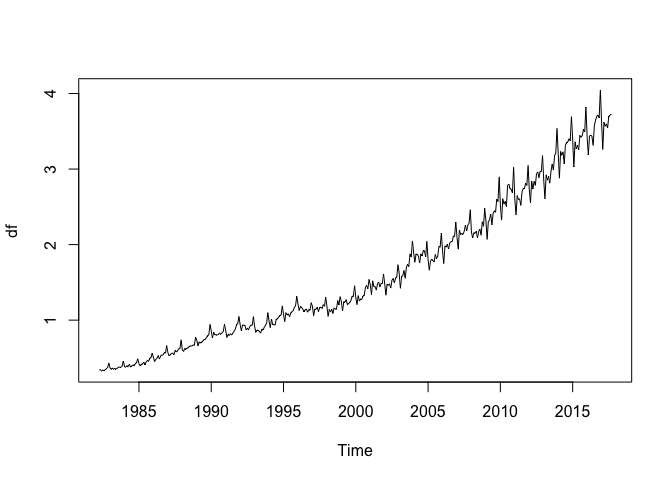

Load our data. We’ll use the auscafe data set from the forecast library. This data set contains monthly Australian take-away food expenditure from 1980 to 2019.

df <- auscafe

plot(df)

We can see that this data exhibits seasonality and has a strong trend line, both of which will be interesting to model using ARIMA.

Before we start estimating what kind of ARIMA model to use, we should do some quick exploratory data analysis (EDA). We can use the is.ts method to ensure that our data set is indeed a time series:

is.ts(df)

Because our data set is in fact a time series data set, we can proceed with ARIMA modeling.

Step 4: Check stationarity and variance

Now, we need to establish whether our time series model is stationary or not. ARIMA models can handle cases where the non-stationarity is due to a unit-root but may not work well at all when non-stationarity is of another form.

There are three basic criteria for a series to be classified as stationary series. First, the mean of the series should not be a function of time, this means that the mean should be roughly constant though some variance can be modeled. Second, the variance of the series should not be a function of time. This property is known as homoscedasticity. Third, the covariance of the terms, for example the kth term and the (k + n)th term, should not be a function of time.

Essentially a stationary time series is a flat looking series, without trend, constant variance over time, a constant autocorrelation structure over time, and no periodic fluctuations. It should resemble white noise as closely as possible. It’s fairly evident that our time series is non-stationary so our model will likely need to reflect that.

We can confirm that our time series is non-stationary by using two tests: the Augmented Dickey-Fuller (ADF) test and the Kwiatkowski-Phillips-Schmidt-Shin (KPSS) test. First, the ADF test. This is a type of statistical test called a unit root test. The ADF test is conducted with the following assumptions:

- The null hypothesis for the test is that the series is non-stationary, or series has a unit root.

- The alternative hypothesis for the test is that the series is stationary, or series has no unit root.

If the null hypothesis cannot be rejected, then this test may provide evidence that the series is non-stationary. This means that when you run the ADF test, if the test statistic is less than the critical value and p-value is less than 0.05, then we reject the null hypothesis, which means the time series is stationary.

adf.test(df)

The ADF test here returns a p-value of 0.8879 which indicates that the time series is not stationary and has a unit root. Luckily, the ARIMA model specification can handle time series data which contains a unit root. If the non-stationarity came from another source then we might difference the data using the diff method.

Next, we can identify the trend and the seasonality components of our time series by using the decompose method.

decomp <- decompose(df)

autoplot(decomp)

This returns a visualization of the decomposition.

We can see that our time series contains trend and seasonal components but also that the variance of the time series is increasing over time. ARIMA models handle seasonality and non-stationarity well but they don't handle changes in variance or heteroskedasticity especially well.

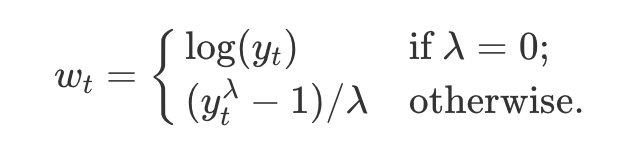

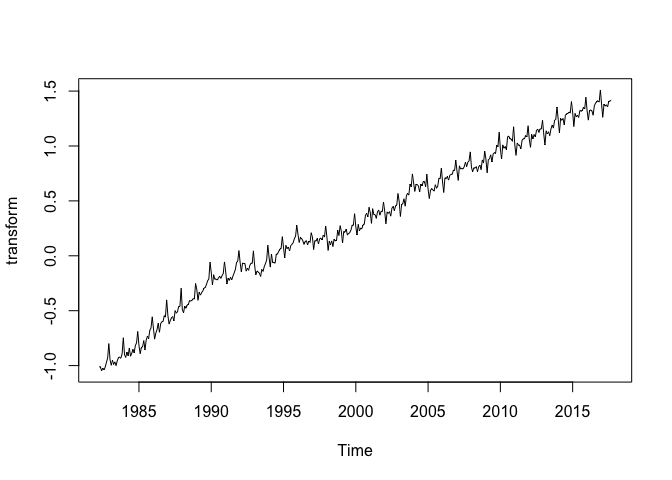

When we encounter heteroskedasticity in a time series it's common to take a log transformation to manage it. One tool for doing this is the Box-Cox transformation. The BoxCox() method takes a lambda parameter which is defined as follows:

This lambda value is passed to the BoxCox() method to remove the excess variance from the time series through a log transform:

lambda <- BoxCox.lambda( df )

transform <- BoxCox(df, lambda)

Now, we can plot our detrended time series.

plot(transform)

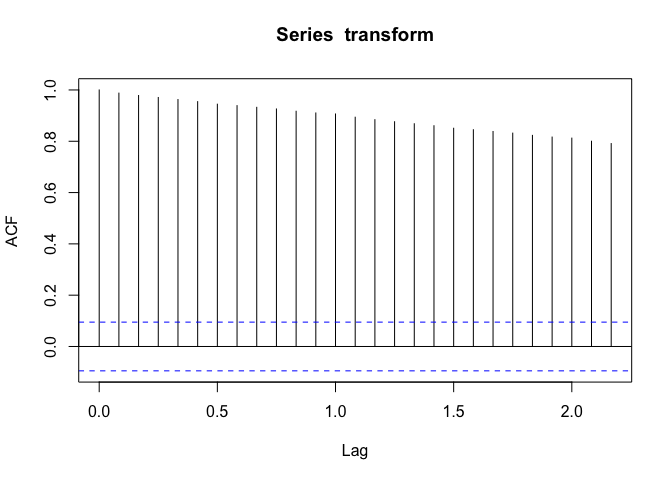

Step 5: Plot Autocorrelations

Next, we need to check to see where our de-trended time series data contains autocorrelations. We can see the degree to which a time series is correlated with its past values by calculating the auto-correlation. Calculating the autocorrelation can answer questions about whether the data exhibit randomness and how related one observation is to an immediately adjacent observation. This can give us a sense of what sort of model might best represent the data. The autocorrelations are often plotted to see the correlation between the points up to and including the lag unit. There are two approaches to autocorrelations: the autocorrelation function (ACF) and the partial autocorrelation function (PACF).

In ACF, the correlation coefficient is in the x-axis whereas the number of lags (referred to as the lag order) is shown in the y-axis. In R we can use the acf function to create an autocorrelation plot. The number of lags can be tuned with the lag.max parameter.

acf(transform)

This indicates that our data points are strongly auto-correlated and thus will probably use an AR component, perhaps with a higher order.

It is also a good idea to check the PACF as well. A partial correlation is a conditional correlation. As an example, think of a time series where the time series contains values at Xt, Xt+1, and Xt+2. The partial correlation between y and Xt+2 is the correlation between the variables determined taking into account how both y and Xt+2 are related to Xt and Xt+1.

The PACF is helpful in determining the order of the AR part of the ARIMA model. It is also useful to determine or validate how many seasonal lags to include in the forecasting equation of a moving average based forecast model for a seasonal time series. This is called the seasonal moving average (SMA) order of the process.

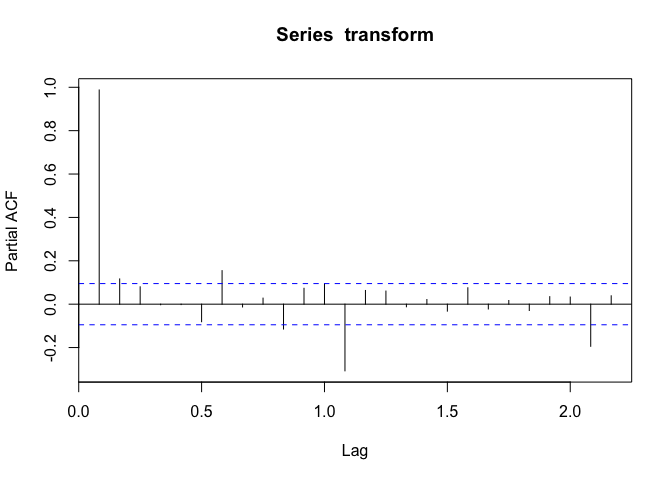

pacf(transform)

We can see that the values of the PACF plot drop off quickly after the 1.0 lag, indicating that lags after 1.0 are less significant.

A rough rubric for when to use AR terms in the model is when:

- ACF plots show autocorrelation decaying towards zero.

- A PACF plot cuts off quickly towards zero.

- An ACF plot of a stationary series shows positive at lag 1.

A rough rubric for when to use MA terms in the model is when:

- The ACF is negatively autocorrelated at lag 1.

- An ACF that drops sharply after a few lags.

- A PACF decreases gradually rather than suddenly.

We can see from our two plots that our ACF plot shows a positive value at Lag 1 and that the PACF cuts off somewhat quickly. This might indicate that we should use an AR term in our model.

Step 6: Train an ARIMA model

Now that we have a sense of what kinds of ARIMA model might represent our data well, we can begin training a model. We'll create a train data set of 80% of our data and a test data set of 20% of our data.

train <- head(transform, round(length(transform) * 0.8))

h <- length(transform) - length(train)

test <- tail(transform, h)

Now it’s time to actually train several models to compare their performance on our test data. First, we can call the arima function without a seasonal component, which has the following parameters:

arima(dataset, (p,d,q))

Where p is the order of the autoregressive term, d is degree of differencing, and q is the number of moving average terms.

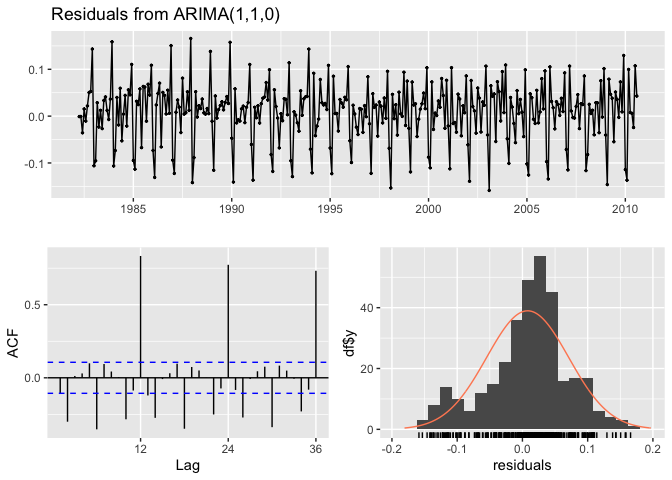

Because we saw characteristics in the ACF and PACF plots that might indicate an AR model, we'll create a first-order autoregressive model. We use the checkresiduals function to generate graphs of the residuals, the ACF, and the distribution of the residuals. We'll use an autoregressive term and a first difference term because our data exhibits non-stationarity:

non_seasonal_model <- arima(train, order = c(1, 1, 0))

non_seasonal_model

This returns:

Call:

arima(x = train, order = c(1, 1, 0))

Coefficients:

ar1

-0.3039

s.e. 0.0516

sigma^2 estimated as 0.004022: log likelihood = 455.22, aic = -906.44

Here, we see the coefficients for the model specification and some of the calculated statistics for it:

- sigma^2: this represents the variance of the residual values, lower is better

- log likelihood: this is maximum likelihood estimation for the ARIMA model, higher is better

- aic: a measure of the goodness of fit and the parsimony of the model, lower is better

checkresiduals(non_seasonal_model)

The residuals here look constant, but the ACF plot shows that we have a strong seasonal component to address. To do this, we’ll create a seasonal ARIMA model. We write SARIMA as:

ARIMA(p,d,q)(P, D, Q)m,

Where p is the order of the autoregressive term, d is the degree of differencing, q is the number of moving average terms, m refers to the number of periods in each season, and (P, D, Q ) represents the (p,d,q) for the seasonal part of the time series.

The seasonal autoregressive component captures the relationship between the current observation and a certain number of lagged observations at seasonal intervals.

The seasonal differencing takes into account the seasons and differences of the current value and its value in the previous season. For instance the difference for the month may would be value in May 2018 minus the value in May 2017.

The component moving average captures the relationship between the current observation and the residual errors from a moving average model applied to lagged observations at seasonal intervals.

Since the PACF plot indicates that the autocorrelations between plots drop off quickly, we might believe that the differences between the seasonal components of the time are down to noise and an autocorrelation. A model that has both an autoregressive term and a moving average term is called an ARMA model. This would indicate an MA term in the seasonal component:

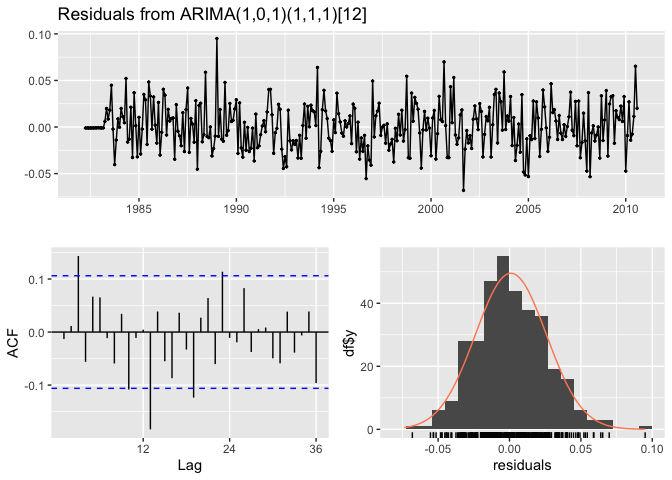

seasonal_model <- arima(train, order=c(1,0,1), seasonal=c(1,1,1))

checkresiduals(seasonal_model)

This seems to show a much better fit to our data, the ACF plot no longer shows the seasonality as strongly as the previous model. We’ll examine statistical ways to compare fitted models in the next section.

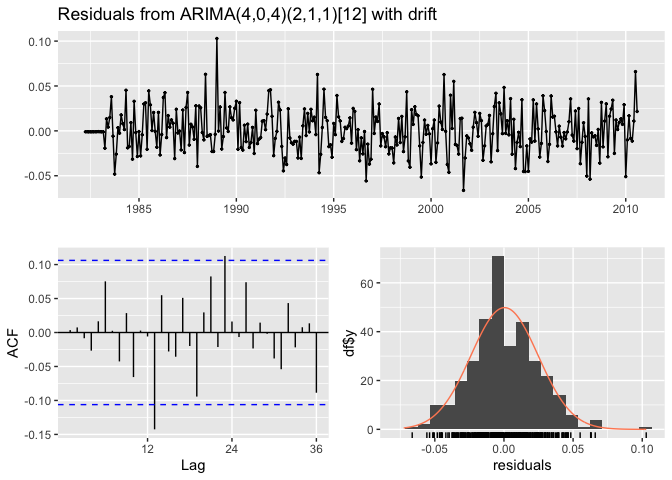

Finally, we can use the auto.arima function to train a model based on a search of parameters and comparison of the Akaike Information Criteria (or AIC) of each model. We simply pass the data to auto.arima and the best model will be returned:

auto_model <- auto.arima(train)

checkresiduals(auto_model)

We can see that the auto-ARIMA returns a model of ARIMA(4,0,4)(2,1,1) [12]. That is, a moving average model with an autoregressive component, a seasonal autoregressive component, a seasonal differencing component, and a seasonal moving average, with a seasonal period of 12 time steps.

Now we have three models to compare.

Step 7: Compare the models

The first way to compare models is to examine the AIC of each model. In this case, a lower AIC is better.

print(paste("non seasonal fit :", non_seasonal_model$aic))

print(paste("seasonal fit :", seasonal_model$aic))

print(paste("auto-arima fit :", auto_model$aic))

This returns:

[1] "non seasonal fit : -906.435866288605"

[1] "seasonal fit : -1458.96047140238"

[1] "auto-arima fit : -1461.18386865639"

The auto-ARIMA method calculates a corrected AIC called the AICC as well, which can be more accurate for model comparison, but the other two do not, so we'll stick with the AIC to compare. The Bayesian Information Criterion or BIC is also sometimes used to compare models.

Our seasonal models fit the data much better than our non-seasonal model, which is unsurprising. We can also forecast multiple predictions using the forecast method. We pass the model that we would like to use for forecasting and the number of time periods to forecast.

forecast1=forecast(non_seasonal_model, h = 48)

forecast2=forecast(seasonal_model, h = 48)

forecast3=forecast(auto_model, h = 48)

Once we’ve created 3 forecasts we can see the output from each of them and compare them to the test period:

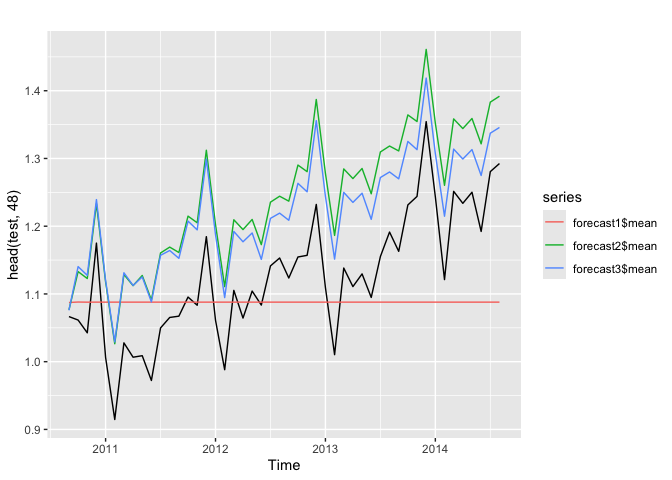

autoplot(head(test, 48)) +

autolayer(forecast1$mean) +

autolayer(forecast2$mean) +

autolayer(forecast3$mean)

We can see in this visualization that the predicted values that the simple non-seasonal model fails to catch any of the seasonal variation and quickly reverts to predicting the mean. The two seasonal models tend to overpredict the value of the trend, how much the seasonal values will increase relative to their previous values. The error terms for the models are increasing steadily throughout the forecast period.

forecast::accuracy(forecast1$mean, test)

forecast::accuracy(forecast2$mean, test)

forecast::accuracy(forecast3$mean, test)

This returns:

ME RMSE MAE MPE MAPE ACF1 Theils U

Forecast1 0.03696918 0.09968117 0.07870493 2.63047 6.81939 0.6479524 1.29379

Forecast2 -0.1169742 0.1205266 0.1169742 -10.4623 10.4623 0.6152354 1.630191

Forecast3 -0.09367093 0.09715293 0.09367093 -8.473249 8.473249 0.5519822 1.336785

The RMSE for our auto-arima model is only slightly better than our flatlined non-seasonal model but if we forecast a larger time window, we'd see that improve. Still, this shows some of the limitations of ARIMA forecasting.

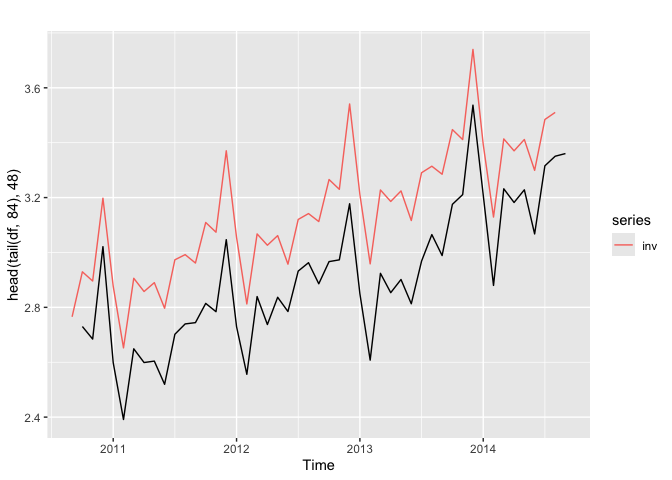

Because our data has been transformed we may be interested in an untransformed version of the forecast for comparison. We would use the InvBoxCox method to reverse the Box-Cox transformation and get non-transformed values:

inv <- InvBoxCox(forecast3$mean, BoxCox.lambda( df ))

autoplot(head(tail(df, 84), 48)) + autolayer(inv)

This gives us the untransformed test data and untransformed prediction.

Summary and next steps

In this tutorial, we've learned about the ARIMA algorithm and how we can use it to forecast time series data. We learned to test for stationarity and homoskedasticity in a time series and how to transform a time series. We fit multiple ARIMA models and compared them using the AIC metric and forecasts. You learned about the Auto-ARIMA method to generate a model by comparing values of d to mitigate the stationarity and values of p and d to minimize AIC.

Try watsonx for free

Build an AI strategy for your business on one collaborative AI and data platform called IBM watsonx, which brings together new generative AI capabilities, powered by foundation models, and traditional machine learning into a powerful platform spanning the AI lifecycle. With watsonx.ai, you can train, validate, tune, and deploy models with ease and build AI applications in a fraction of the time with a fraction of the data.

Try watsonx.ai, the next-generation studio for AI builders.

Next steps

Explore more articles and tutorials about watsonx on IBM Developer.