Article

Proactive batch processing with AI in z/OS

Learn how AI in z/OS predicts workload spikes and adjusts batch initiators in advance to improve performance and efficiency

Archived content

Archive date: 2026-01-04

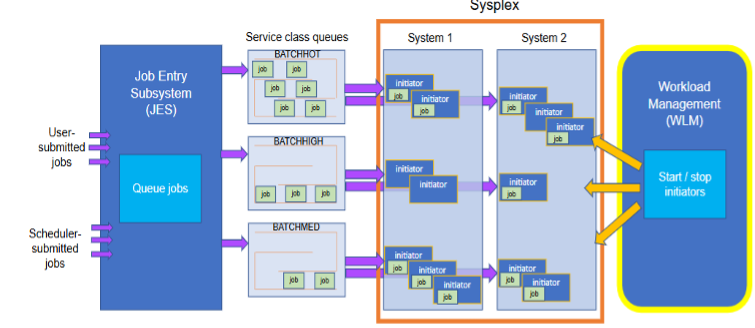

This content is no longer being updated or maintained. The content is provided “as is.” Given the rapid evolution of technology, some content, steps, or illustrations may have changed.

AI-powered Workload Manager (WLM) batch initiator management in IBM z/OS version 3.1 or later uses artificial intelligence to predict workload spikes and adjust the number of batch initiators in advance. Traditional WLM starts initiators only after workloads arrive, which can cause delays when many jobs enter the input queue for a service class.

By analyzing and modeling recurring batch workloads, AI can predict spikes that happen at set times or intervals. This allows WLM to start initiators before the spike, reducing queue lengths and helping jobs start and finish sooner.

Because the models anticipate workload patterns that change between day and night, across days of the week, and from one service class to another, each service class can meet its WLM policy goals more effectively.
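As a rough illustration of what predicting a recurring spike can mean, the sketch below averages historical job arrivals per weekday and hour, then sizes the initiator count for a slot before it begins. It is not the model that z/OS uses. The function names, the `jobs_per_initiator` ratio, and the sample data are all assumptions made up for this example.

```python
import math
from collections import defaultdict
from statistics import mean


def build_profile(history):
    """Average arrivals per (weekday, hour) slot.

    history: iterable of (weekday, hour, jobs_arrived) tuples.
    """
    buckets = defaultdict(list)
    for weekday, hour, jobs in history:
        buckets[(weekday, hour)].append(jobs)
    return {slot: mean(counts) for slot, counts in buckets.items()}


def initiators_needed(profile, weekday, hour, jobs_per_initiator=4, floor=1):
    """Initiators to have ready before the given slot begins.

    jobs_per_initiator is a hypothetical sizing ratio, not a WLM parameter.
    """
    expected = profile.get((weekday, hour), 0)
    return max(floor, math.ceil(expected / jobs_per_initiator))


# Three weeks of invented data: a spike every Monday (weekday 0) at 02:00.
history = [
    (0, 2, 40), (0, 2, 44), (0, 2, 36),
    (0, 3, 4), (0, 3, 6), (0, 3, 5),
]
profile = build_profile(history)

print(initiators_needed(profile, 0, 2))  # 10
print(initiators_needed(profile, 0, 3))  # 2
```

A slot with no history falls back to the `floor` value, which stands in for whatever baseline a reactive policy would already provide.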

Comparison of batch workload management approaches

| Feature | Reactive batch workload management | Proactive AI-powered batch workload management |
|---|---|---|
| Initiator start time | After workload arrives | Before workload arrives |
| Workload queue size | Larger | Smaller |
| Goal achievement | May be delayed | Improved |
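The queue-size difference in the comparison can be made concrete with a toy simulation. The sketch below assumes each initiator starts one queued job per tick and gives both approaches the same total capacity; only the timing differs. The model, the tick granularity, and all numbers are illustrative assumptions, not measurements from z/OS.

```python
def simulate(arrivals, initiators_per_tick):
    """Return the largest number of jobs left waiting after any tick.

    Assumes each initiator starts exactly one queued job per tick.
    """
    queue = 0
    peak = 0
    for arrived, initiators in zip(arrivals, initiators_per_tick):
        queue += arrived
        queue -= min(queue, initiators)
        peak = max(peak, queue)
    return peak


arrivals = [2, 2, 20, 2, 2, 2]  # spike at tick 2

# Reactive: extra initiators start only on the tick after the spike is seen.
reactive = [2, 2, 2, 10, 10, 2]
# Proactive: extra initiators are already running when the spike arrives.
proactive = [2, 2, 10, 10, 2, 2]

print(simulate(arrivals, reactive))   # 18
print(simulate(arrivals, proactive))  # 10
```

With identical total capacity, starting initiators one tick earlier reduces the peak queue from 18 jobs to 10 in this example.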

Key challenges addressed by AI in WLM batch initiator management

Reactive resource allocation: Traditional WLM starts initiators only after workloads arrive, causing delays. AI predicts spikes and adjusts initiators in advance, reducing wait times.

Manual fine-tuning: Managing spikes often needs manual changes and trial-and-error by system programmers. AI automates this work and reduces the need for specialized skills.

Meeting performance goals: Without proactive action, workloads may miss service-level agreements (SLAs) because resources are allocated too late. AI prepares resources in advance, improving SLA compliance and system performance.

Handling complex workload patterns: Batch workloads may have irregular patterns, such as daily or weekly spikes mixed with unexpected submissions. AI uses historical data to predict these patterns and adjust resources accordingly.

Skills gap: Mainframe complexity has created a shortage of experts. AI solutions with pre-built models and simple interfaces reduce the need for deep workload management skills.

Resource utilization: Poor allocation can cause overuse or underuse of capacity. AI adjusts resources in real time to improve utilization and avoid bottlenecks.

By solving these challenges, AI improves efficiency, lowers operational effort, and supports business goals.

Main benefits of using AI in WLM batch initiator management

Proactive resource allocation: AI predicts workload spikes and adjusts batch initiators in advance so jobs start without delay.

Improved system performance: Shorter queues, less waiting, and faster response times help meet SLAs.

Simplified operations: Reduces the need for manual tuning, which makes workload management easier and narrows the skills gap for new professionals.

Optimized resource use: Adjusts initiators based on workload patterns and real-time data to avoid overuse or underuse.

Flexible control modes: Users can switch between AI, non-AI, and simulation modes. Simulation mode shows predictions without taking action, helping build trust before full use.

No extra AI skills needed: Built-in models in z/OS allow use without additional AI or data science expertise.

These benefits improve efficiency, cut delays, and align system performance with business needs.
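The three control modes described in the list above can be pictured as a small decision function. This is a conceptual sketch only: the `Mode` names, `plan_initiators`, and the message strings are hypothetical and do not correspond to actual WLM or z/OSMF settings.

```python
from enum import Enum


class Mode(Enum):
    NON_AI = "non-ai"          # reactive behavior only
    SIMULATION = "simulation"  # prediction is reported but not applied
    AI = "ai"                  # prediction is applied


def plan_initiators(mode, reactive_target, predicted_target):
    """Return (initiators_to_apply, message_for_operator)."""
    if mode is Mode.AI:
        return predicted_target, f"applied prediction: {predicted_target}"
    if mode is Mode.SIMULATION:
        return reactive_target, f"prediction only (not applied): {predicted_target}"
    return reactive_target, "prediction disabled"


for mode in Mode:
    print(mode.value, plan_initiators(mode, reactive_target=3, predicted_target=8))
```

In simulation mode the applied value is the same as in non-AI mode, so an operator can compare the reported predictions with what actually happened before switching to AI mode.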

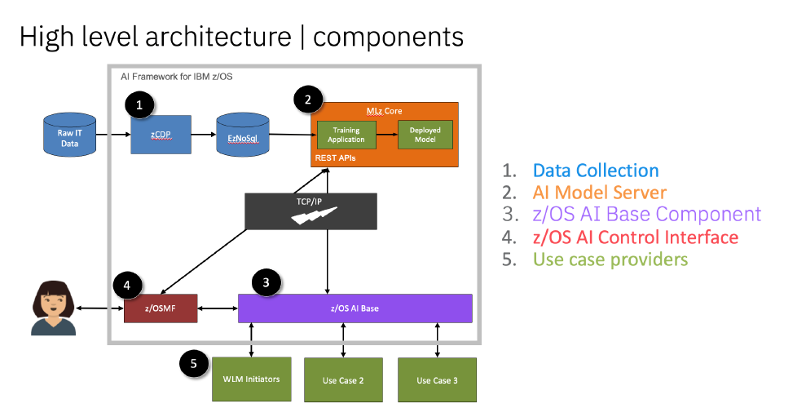

AI Framework for z/OS: How it works

Key components

Data collection – Collects operational data, such as System Management Facilities (SMF) records, for AI model training and prediction.

AI model training and deployment – Uses historical system data to build machine learning models for workload patterns, then deploys them in z/OS.

Inference and scoring – Applies trained models to real-time data to provide predictive insights for system management.

Monitoring and retraining – Checks model accuracy and retrains the models as needed to keep predictions reliable.
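The monitoring and retraining component can be illustrated with a simple accuracy check. The sketch below compares predicted and observed job arrivals using mean absolute error and flags the model for retraining when the error passes a threshold. The metric, the `max_error` threshold, and the sample values are assumptions chosen for illustration; the source does not say which accuracy measure the AI Framework uses.

```python
def mean_absolute_error(predicted, actual):
    """Average absolute difference between two equal-length sequences."""
    if len(predicted) != len(actual) or not predicted:
        raise ValueError("need two non-empty sequences of equal length")
    return sum(abs(p - a) for p, a in zip(predicted, actual)) / len(predicted)


def needs_retraining(predicted, actual, max_error=5.0):
    """True when recent predictions have drifted past the tolerated error.

    max_error is a hypothetical threshold, not a documented setting.
    """
    return mean_absolute_error(predicted, actual) > max_error


predicted = [40, 5, 5, 40]
stable = [38, 6, 4, 43]    # workload still matches the learned pattern
shifted = [10, 5, 35, 12]  # the spike has moved to a different slot

print(mean_absolute_error(predicted, stable))   # 1.75
print(needs_retraining(predicted, stable))      # False
print(mean_absolute_error(predicted, shifted))  # 22.0
print(needs_retraining(predicted, shifted))     # True
```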

Example: AI-powered WLM batch initiator management

A key use of the AI framework is in WLM batch initiator management. WLM uses AI models trained on historical SMF data to predict workload surges. For example, if a system sees a spike in batch processing at a certain time, the AI model detects the pattern and prompts WLM to start more initiators in advance. This prepares the system for incoming workloads, improving performance and resource use.

The AI runs within z/OS, hiding the complexity of data science from administrators and users. This lets organizations benefit from AI without needing special AI skills.

To enable these capabilities, administrators configure the AI Framework in the z/OS Management Facility (z/OSMF). This includes setting up data collection, training models with system-specific data, and deploying them for predictions. Regular monitoring and retraining help keep the models accurate as workloads change.

With AI built into its core, z/OS 3.1 or later delivers automated system management that improves efficiency and reduces operational effort.

Why it matters for enterprises

Organizations that depend on batch processing, such as banks, insurance companies, and other large enterprises, can gain major benefits from AI-powered WLM in z/OS 3.1 or later. It offers better scalability, adaptability, and efficiency for critical workloads.

This AI-driven approach reduces manual effort, boosts performance, and allocates resources dynamically. It sets a new standard for intelligent workload management.

Summary

This article explains how AI-powered WLM batch initiator management in z/OS predicts workload spikes and adjusts initiators in advance. It compares the traditional reactive method with the proactive AI approach, showing benefits such as shorter queues and better goal achievement. The AI Framework collects system data, trains models, and makes predictions to optimize resource allocation. A practical example is using AI to anticipate regular workload surges and start initiators early. This approach improves system performance, reduces manual work, and adapts to changing workload patterns.